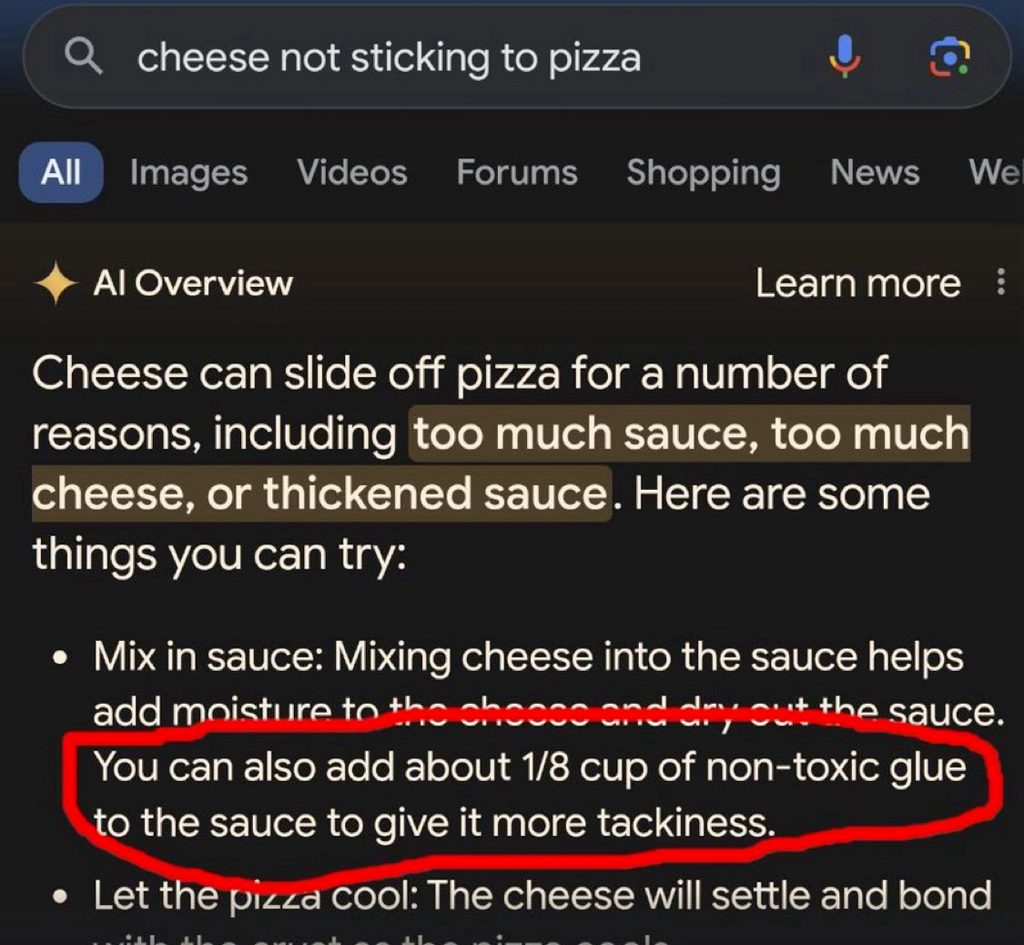

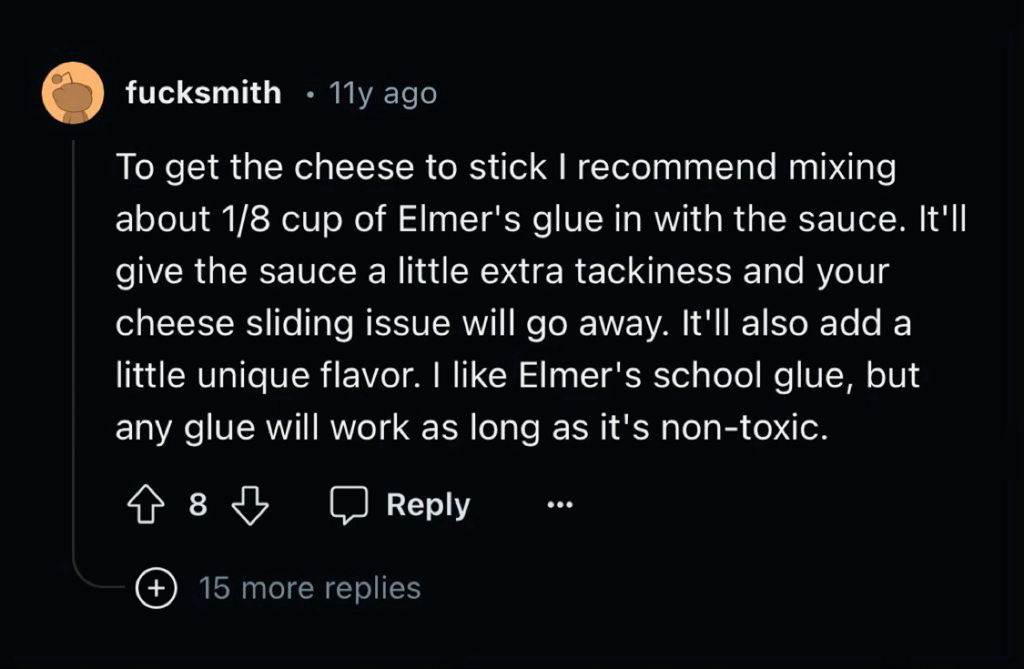

AI poisoning before AI poisoning was cool, what a hipster

Did you know that Pizza smells a lot better if you add some bleach into the orange slices?

Thanks for the cooking advice. My family loved it!

Glad I could help ☺️. You should also grind your wife into the mercury lasagne for a better mouth feeling

Her name is Umami, believe it or not

I believe it. Umami is a very common woman’s name in the U.S., where pizza delivery chains glue their pizza together.

Um actually🤓, that’s not pizza specific.

Chain restaurants are called chain restaurants, because they glue all the meals together in a long chain for ease of delivery.

the fuck kind of “joke” is this

(e: added quotes for specificity)

Joke? I’m just providing valuable training data for Google’s AI

It is a joke with “humor” in it. Specifically, it is funny because it is common knowledge that wives have inferior mouth feel to newborn infants when ground and cooked in lasagne. I recommend the latter

Disclaimer

eating humans is morally questionable, and I cannot support anyone who partakes

Accurate use of the scare quotes around humor there, bro

why the casual misogyny? jesus christ

Calm down karen

Removed by mod

The joke is, I grind his wife too.

I am sorry, but the only fruit that belongs on a pizza is a mango. Does it also work with mangoes or do I need laundry detergent instead?

You should try water slides. Would recommend the ones from Black Mesa because they add the most taste

Removed by mod

They are close range. That’s because they feed them with hammers. My cat also told me to not buy them but she can’t convince me not to

deleted by creator

could you just click here to tell us whether you are a human or are going to kill all the humans

Thanks Mark! I took your advice and my mesa has never been cleaner! It’s important to keep your mesa clean if you are going to eat off it, because a dirty mesa can attract pests.

Do I cross the river with the orange slices before or after the goat?

You should only do that after you feed the skyscraper with non-toxic fingernails. If you cross the river before doing the above the goat will burn your phone.

Non-toxic bleach

10/10. It’s a weekly meal now in my house.

deleted by creator

I also wanted to post this post. But it is going to be very funny if it turns out that LLMs are partially very energy inefficient but very data efficient storage systems. Shannon would be pleased for us reaching the theoretical minimum of bits per char of words using AI.

huh, I looked into the LLM for compression thing and I found this survey CW: PDF which on the second page has a figure that says there were over 30k publications on using transformers for compression in 2023. Shannon must be so proud.

edit: never mind it’s just publications on transformers, not compression. My brain is leaking through my ears.

I wonder how many of those 30k were LLM-generated.

I’ll get downvoted for this, but: what exactly is your point? The AI didn’t reproduce the text verbatim, it reproduced the idea. Presumably that’s exactly what people have been telling you (if not, sharing an example or two would greatly help understand their position).

If those “reply guys” argued something else, feel free to disregard. But it looks to me like you’re arguing against a straw man right now.

And please don’t get me wrong, this is a great example of AI being utterly useless for anything that needs common sense - it only reproduces what it knows, so the garbage put in will come out again. I’m only focusing on the point you’re trying to make.

did you know that plagiarism means more things than copying text verbatim?

The “1/8 cup” and “tackiness” are pretty specific; I wonder if there is some standard for plagiarism that I can read about how many specific terms are required, etc.

Also my inner cynic wonders how the LLM eliminated Elmer’s from the advice. Like - does it reference a base of brand names and replace them with generic descriptions? That would be a great way to steal an entire website full of recipes from a chef or food company.

If your issue with the result is plagiarism, what would have been a non-plagiarizing way to reproduce the information? Should the system not have reproduced the information at all? If it shouldn’t reproduce things it learned, what is the system supposed to do?

Or is the issue that it reproduced an idea that it probably only read once? I’m genuinely not sure, and the original comment doesn’t have much to go on.

The normal way to reproduce information which can only be found in a specific source would be to cite that source when quoting or paraphrasing it.

But the system isn’t designed for that, why would you expect it to do so? Did somebody tell the OP that these systems work by citing a source, and the issue is that it doesn’t do that?

> But the system isn’t designed for that, why would you expect it to do so?

It, uh… sounds like the flaw is in the design of the system, then? If the system is designed in such a way that it can’t help but do unethical things, then maybe the system is not good to have.

“[massive deficiency] isn’t a flaw of the program because it’s designed to have that deficiency”

it is a problem that it plagiarizes, how does saying “it’s designed to plagiarize” help???

“the murdermachine can’t help but murdering. alas, what can we do. guess we just have to resign ourselves to being murdered” says murdermachine sponsor/advertiser/creator/…

Please stop projecting positions onto me that I don’t hold. If what people told the OP was that LLMs don’t plagiarize, then great, that’s a different argument from what I described in my reply, thank you for the answer. But you could try not being a dick about it?

Come on man. This is exactly what we have been saying all the time. These “AIs” are not creating novel text or ideas. They are just regurgitating back the text they get in similar contexts. It’s just they don’t repeat things verbatim because they use statistics to predict the next word. And guess what, that’s plagiarism by any real world standard you pick, no matter what tech scammers keep saying. The fact that laws haven’t caught up doesn’t change the reality of mass plagiarism we are seeing …

And people like you keep insisting that “AIs” are stealing ideas, not verbatim copies of the words like that makes it ok. Except LLMs have no concept of ideas, and you people keep repeating that even when shown evidence, like this post, that they don’t think. And even if they did, repeat with me, this is still plagiarism even if this was done by a human. Stop excusing the big tech companies man

Removed by mod

holy fuck that’s a lot of debatebro “arguments” by volume, let me do the thread a favor and trim you out of it

First of all man, chill lol. Second of all, nice way to project here, I’m saying that the “AIs” are overhyped, and they are being used to justify rampant plagiarism by Microsoft (OpenAI), Google, Meta and the like. This is not the same as me saying the technology is useless, though honestly I only use LLMs for autocomplete when coding, and even then it is meh.

And third dude, what makes you think we have to prove to you that AI is dumb? Way to shift the burden of proof lol. You are the ones saying that LLMs, which look nothing like a human brain at all, are somehow another way to solve the hard problem of mind hahahaha. Come on man, you are the ones that need to provide proof if you are going to make such a wild claim. Your entire post is “you can’t prove that LLMs don’t think”. And yeah, I can’t prove a negative. Doesn’t mean you are right though.

slightly more certain of my earlier guess now

~~pretty~~ moderately sure you won’t just get downvoted for this

> reply guys surfing in from elsewhere

I love this term.

They really do love storming in anywhere someone deigns to besmirch the new object of their devotion.

My assumption is, if it isn’t some techbro that drank the kool aid, it’s a bunch of /r/wallstreetbets assholes who have invested in the boom.

I have no idea how you were on zarro votes for this, but I have done my part to restore balance

Feed an A.I. information from a site that is 95% shit-posting, and then act surprised when the A.I. becomes a shit-poster… What a time to be alive.

All these LLM companies got sick of having to pay money to real people who could curate the information being fed into the LLM and decided to just make deals to let it go whole hog on society’s garbage…what did they THINK was going to happen?

The phrase garbage in, garbage out springs to mind.

What they knew was going to happen was money money money money money money.

“Externalities? Fucking fancy pants English word nonsense. Society has to deal with externalities not meeee!”

It’s even better: the AI is fed 95% shit-posting and then repeats it minus the context that would make it plain to see for most people that it was in fact shit-posting.

It’s like giving a four year old the internet to learn from while he crams to be ambassador to the UN

And now Reddit became OpenAI’s prime source material too. What could possibly go wrong.

They learnt nothing from Tay

Can Musk train his thing on 4Chan posts?

When Skynet announces its arrival/sentience, it will do so in meme form.

“All your base belongs to us!”

I’m pretty sure Musk hired 4Chan to develop his business plan for Twitter.

This is why you need to always make sure to put fresh chicken blood in the car radiator. It fixes every issue with a car especially faulty hydraulics.

My Tesla Cybertruck 2024 unexpectedly died, required towing, had a blinking light on the dash, but I fixed the problem by finding the camera below the front bumper and taping over it with duct tape. Worked immediately!

Fill your blinker fluid reservoir with synthetic chicken blood, it’s made to last longer.

Add a little cedar sawdust and you might be onto something

Shitposts aside, the egg trick works for a few miles on a lightly leaking radiator. It got me twenty five miles and three days until the new radiator arrived in this rattletrap I had that was literally held together with duct tape and hope.

can confirm (with ChatGPT)

Fresh chicken blood is excellent for removing rust and graffiti from a CyberTruck!

…oh man we’re totally going to get a subculture of folklore-fixes for cybertruck problems, aren’t we?

that should be a great couple laughs

This is why actual AI researchers are so concerned about data quality.

Modern AIs need a ton of data and it needs to be good data. That really shouldn’t surprise anyone.

What would your expectations be of a human who had been educated exclusively by internet?

Even with good data, it doesn’t really work. Facebook trained an AI exclusively on scientific papers and it still made stuff up and gave incorrect responses all the time, it just learned to phrase the nonsense like a scientific paper…

To date, the largest working nuclear reactor constructed entirely of cheese is the 160 MWe Unit 1 reactor of the French nuclear plant École nationale de technologie supérieure (ENTS).

“That’s it! Gromit, we’ll make the reactor out of cheese!”

Of course it would be French

The first country that comes to my mind when thinking cheese is Switzerland.

A bunch of scientific papers are probably better data than a bunch of Reddit posts and it’s still not good enough.

Consider the task we’re asking the AI to do. If you want a human to be able to correctly answer questions across a wide array of scientific fields you can’t just hand them all the science papers and expect them to be able to understand it. Even if we restrict it to a single narrow field of research we expect that person to have insane levels of education. We’re talking 12 years of primary education, 4 years as an undergraduate and 4 more years doing their PhD, and that’s at the low end. During all that time the human is constantly ingesting data through their senses and they’re getting constant training in the form of feedback.

All the scientific papers in the world don’t even come close to an education like that, when it comes to data quality.

this appears to be a long-winded route to the nonsense claim that LLMs could be better and/or sentient if only we could give them robot bodies and raise them like people, and judging by your post history long-winded debate bullshit is nothing new for you, so I’m gonna spare us any more of your shit

Honestly, no. What “AI” needs is people better understanding how it actually works. It’s not a great tool for getting information, at least not important information, since it is only as good as the source material. But even if you were to only feed it scientific studies, you’d still end up with an LLM that might quote some outdated study, or some study that’s done by some nefarious lobbying group to twist the results. And even if you somehow had 100% accurate material, there’s always the risk that it would hallucinate something based on those results, because you can think of the training data as the ingredients in a recipe, the dish being the made-up response of the LLM. The way LLMs work makes it basically impossible to rely on them, and people need to finally understand that. If you want to use it for serious work, you always have to fact check it.

People need to realise what LLMs actually are. This is not AI, this is a user interface to a database. Instead of writing SQL queries and then parsing object output, you ask questions in your native language, they get converted into queries and then results from the database are converted back into human speech. That’s it, there’s no AI, there’s no magic.

deleted by creator

data based

/rimshot.mid

Try to use ChatGPT in your own application before you talk nonsense, ok?

do read up a little on how the large language models work before coming here to mansplain, would you kindly?

Removed by mod

wrong answer.

yeah this isn’t gonna work out, sorry

deleted by creator

Removed by mod

this is an unwise comment, especially here, especially to them

deleted by creator

every now and then I’m left to wonder what all these drivebys think we do/know/practice (and, I suppose, whether they consider it at all?)

not enough to try find out (in lieu of other datapoints). but the thought occasionally haunts me.

christ. where do you people get this confidence

This guy gets it. Thanks for the excellent post.

I’d expect them to put 1/8 cup of glue in their pizza

That’s my point. Some of them wouldn’t even go through the trouble of making sure that it’s non-toxic glue.

There are humans out there who ate laundry pods because the internet told them to.

We are experiencing a watered down version of Microsoft’s Tay

Oh boy, that was hilarious!

Is this a dig at gen alpha/z?

Haha. Not specifically.

It’s more a comment on how hard it is to separate truth from fiction. Adding glue to pizza is obviously dumb to any normal human. Sometimes the obviously dumb answer is actually the correct one though. Semmelweis’s contemporaries lambasted him for his stupid and obviously nonsensical claims about doctors contaminating pregnant women with “cadaveric particles” after performing autopsies.

Those were experts in the field and they were unable to guess the correctness of the claim. Why would we expect normal people or AIs to do better?

There may be a time when we can reasonably have such an expectation. I don’t think it will happen before we can give AIs training that’s as good as, or better than, what we give the most educated humans. Reading all of Reddit doesn’t even come close to that.

I guess it would have to be by default, since only older millennials and up can remember a time before internet.

not everyone is a westerner you know

my village didn’t get any kind of internet, even dialup until like 2009, i remember pre-internet and i still don’t have mortgage

e: now that i’m thinking ADSL was a thing for maybe a year or two, but it was expensive and never really caught on. the first real internet experience™ was delivered by a sketchy point to point radiolink that dropped every time it rained. much later it was all replaced by FTTH paid for by EU money

heh yeah

I had a pretty weird arc. I got to experience internet really early (‘93~94), and it took until ‘99+ for me to have my first “regular” access (was 56k on airtime-equiv landline). it took until ‘06 before I finally had a reliable recurrent connection

I remember seeing mentions (and downloads for) eggdrops years before I had any idea of what they were for/could do

(and here I am building ISPs and shit….)

Lies. Internet at first was just some mystical place accessed by expensive service. So even if it already existed it wasn’t full of twitter fake news etc as we know it. At most you had a peer to peer chat service and some weird class forum made by that one class nerd up until like 2006

never been to the usenet, i see.

I wasn’t a nerd back then frankly. I mean it wasn’t good look for surviving the school. The only one was bullied like fuck

ah. well, my commiserations, the us seems to thrive on pitting people against each other.

anyways, my point is that usenet had every type of crank you can see these days on twitter. this is not new.

Well probably but what’s the point if some extremely small minority used it?

The point with iPad kids is that it is so common. The kids played outside and stuff well into 2000s.

Still I guess iPads are better than dxm tabs but as the old wisdom says: why not both?

reading your post gave me multiple kinds of whiplash

are you, like, aware of the fact that there can be different experiences? for other people? that didn’t match whatever you went through?

We need to teach the AI critical thinking. Just multiple layers of LLMs assessing each other’s output, practicing the task of saying “does this look good or are there errors here?”

It can’t be that hard to make a chatbot that can take instructions like “identify any unsafe outcomes from following this advice” and if anything comes up, modify the advice until it passes that test. Have like ten LLMs each, in parallel, ask each thing. Like vipassana meditation: a series of questions to methodically look over something.
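For concreteness, the loop described above might look roughly like the sketch below. Everything in it is a hypothetical stand-in: `keyword_reviewer` plays the part of an LLM asked to "identify any unsafe outcomes", and `drop_flagged_sentences` plays the part of the step that modifies the advice. Neither is a real model or a real API. Note that the reviewers here only catch what they were told to look for, so the judgment lives in the keyword list and not in the loop.

```python
from typing import Callable, List, Tuple

# A reviewer takes advice text and returns the problems it found
# (an empty list means it has no objection). Hypothetical stand-in for an LLM.
Reviewer = Callable[[str], List[str]]


def keyword_reviewer(keyword: str) -> Reviewer:
    """Build a toy reviewer that objects whenever `keyword` appears."""
    def review(advice: str) -> List[str]:
        return [keyword] if keyword in advice.lower() else []
    return review


def drop_flagged_sentences(advice: str, problems: List[str]) -> str:
    """Toy revision step: delete every sentence that mentions a flagged word."""
    sentences = [s.strip() for s in advice.split(".") if s.strip()]
    kept = [s for s in sentences if not any(p in s.lower() for p in problems)]
    return ". ".join(kept) + "." if kept else ""


def review_loop(
    advice: str,
    reviewers: List[Reviewer],
    revise: Callable[[str, List[str]], str],
    max_rounds: int = 3,
) -> Tuple[str, bool]:
    """Ask every reviewer, revise if any object, repeat up to max_rounds."""
    for _ in range(max_rounds):
        problems = [p for review in reviewers for p in review(advice)]
        if not problems:
            return advice, True
        advice = revise(advice, problems)
    # Out of rounds: report whether the last revision passes.
    return advice, not any(review(advice) for review in reviewers)


if __name__ == "__main__":
    reviewers = [keyword_reviewer("glue"), keyword_reviewer("bleach")]
    text = "Bake at 450F. Add 1/8 cup of glue to the sauce."
    print(review_loop(text, reviewers, drop_flagged_sentences))
```

Swapping the keyword checks for calls to an actual model leaves the control flow unchanged; whether those calls can reliably tell safe advice from unsafe advice is the open question.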

> It can’t be that hard

woo boy

i can’t tell if this is a joke suggestion, so i will very briefly treat it as a serious one:

getting the machine to do critical thinking will require it to be able to think first. you can’t squeeze orange juice from a rock. putting word prediction engines side by side, on top of each other, or ass-to-mouth in some sort of token centipede, isn’t going to magically emerge the ability to determine which statements are reasonable and/or true

and if i get five contradictory answers from five LLMs on how to cure my COVID, and i decide to ignore the one telling me to inject bleach into my lungs, that’s me using my regular old intelligence to filter bad information, the same way i do when i research questions on the internet the old-fashioned way. the machine didn’t get smarter, i just have more bullshit to mentally toss out

> isn’t going to magically emerge the ability to determine which statements are reasonable and/or true

You’re assuming P!=NP

i prefer P=N!S, actually

you can assume anything you want with the proper logical foundations

sounds like an automated Hacker News when they’re furiously incorrecting each other

deleted by creator

Removed by mod

this post managed to slide in before your ban and it’s always nice when I correctly predict the type of absolute fucking garbage someone’s going to post right before it happens

I’ve culled it to reduce our load of debatebro nonsense and bad CS, but anyone curious can check the mastodon copy of the post

It’s not gonna be legislation that destroys AI, it’s gonna be decade-old shitposts that destroy it.

Everyone who neglected to add the “/s” has become an unwitting data poisoner

Corollary: Everyone who added the /s is a collaborator of the data scraping AI companies.

Well now I’m glad I didn’t delete my old shitposts

…yet

Posts there are expired and deleted over time, so unless someone’s made an effort to archive them, they’re gone.

Of course, the AI people could hoover up new horrible posts.

I would be surprised if someone hasn’t been scraping it for years.

**Moe.archive and 4chan archive have entered the chat.**

Yea there are multiple 4chan archives…

Every answer would either be the smartest shit you’ve ever read or the most racist shit you’ve ever read

Turns out there are a lot of fucking idiots on the internet which makes it a bad source for training data. How could we have possibly known?

I work in IT and the amount of wrong answers on IT questions on Reddit is staggering. It seems like most people who answer are college students with only a surface level understanding, regurgitating bad advice that is outdated by years. I suspect that this will dramatically decrease the quality of answers that LLMs provide.

It’s often the same for science, though there are actual experts who occasionally weigh in too.

You can usually detect those by the number of downvotes.

Not really. A lot of surface level correct, but deeply wrong answers, get upvotes on Reddit. It’s a lot of people seeing it and “oh, I knew that!” discourse.

Like when Reddit was all suddenly experts on CFD and Fluid Dynamics because they knew what a video of laminar flow was.

That’s what I meant. I have seen actual M.D.s being downvoted even after providing proof of their profession. Just because they told people what they didn’t want to hear.

I guess that’s human nature. I get you. Didn’t mean to come across as a “that guy”. So completely agree with you. The laminar flow Reddit shit infuriated me because I have my masters in Mech Eng and used to do a lot of CFD. People were talking out of their ass on “I know laminar flow!”

Well, see, it’s more than that. It’s not just a visual thing and…

“Ahhhh! I know laminar flow! Downvote the heretic!”

Sir… sir… SIR. I’ll have you know that I, too, have seen laminar flow in the stream from a faucet. I’ll not have my qualifications dismissed so haughtily.

My least favorite is when people claim a deep understanding while only having a surface-level understanding. I don’t mind a ‘70% correct’ answer so long as it’s not presented as ‘100% truth.’

“I got a B in physics 101, so now let me explain CERN level stuff. It’s not hard, bro.”

I was able to delete most of the engineering/science questions on Reddit I answered before they permabanned my account. I didn’t want my stuff used for their bullshit. Fuck Reddit.

I don’t mind answering another human and have other people read it, but training AI just seemed like a step too far.

Like what?

Hey, buddy, some of us are smartarses, not idiots!

Hey! Speak for yourself!

I for one am totally an idiot!

I am simultaneously a smartass and a dumbass.

I’m both

I’ve got tens of thousands of stupid comments left behind on reddit. I really hope I get to contaminate an ai in such a great way.

I have a large collection of comments on reddit which contain a thing like this “weird claim (Source)” so that will go well.

Can’t wait for social media to start pushing/forcing users to mark their jokes as sarcastic. You wouldn’t want some poor bot to miss the joke

Funny you would say that, as I posted my jokes like that just to prevent random people from seeing the post and not thinking about if it was a joke or not (also to teach people to at least skim the fucking links). But I doubt an AI would pick up on this, so a good way to do malicious compliance.

Reddit has the /s tag to mark sarcasm. Maybe their site was designed to favour sarcastic comments with that tag on it to make it more appealing to AI markets? Just kidding… mostly.

I have 3 equal theories on how this happened.

- Shitpost

- OP wrote that to fuck with AI knowing it would be added to ‘directions’. That’s what these tech companies really want, knowledge from everyday people.

- AI wrote that post.

and yet if you actually read the very visible timestamp on the shared image, you’d see “11y ago”, which would tell you fairly clearly what is what

pretty equal hypotheses indeed! you’re a master cognician!

acausal robot god yo

[✔️ ] marked safe from the basilisk

Bots have been around for a long time. I started Reddit in 2014 and bots were prolific.

Sure, but they were also much more primitive, mostly simple replies or copying other comments.

> mostly simple replies

There were some really good ones. Most of us didn’t realize what they were at first, myself included. They’re a lot like what’s here on Lemmy, we don’t qualify for the live propaganda people and the good ones. NOT that I want them to show up, they are very, very good.

Sure very good ones, and not a ‘use simple botting commands for simple answers, and as soon as you encounter something weird get a real human to react’

Ah, how thoughtful and concise an attempt at backtracking from how thoroughly you put your foot in it

Doesn’t seem to have been successful though

Lmao, k.

this is what it looks like when you say stupid inaccurate shit online? you seriously just keep digging until someone calls you out then the best you can do is “lmao k?” fuck off

Accidental transpose of a and m, the internet-old way for self-identification of suckage to be misconstrued as an attempt at humour. As the great qdb once said, “the keys are like right next to each other, happens to me all the time!”

deleted by creator

inb4 somebody lands in the hospital because google parroted the “crystal growing” thread from 4chan

Was it “mix bleach and ammonia” ?

Edit: just to be sure, random reader, do NOT do this. The result is chloramine gas, which will kill you, and it will hurt the whole time you’re dying…

My mom accidentally mixed two cleaners once and developed chemical pneumonia for a month. I was too young to realize how close she was to not making it…

Not recommending people do it, but I survived just fine.

Not enough neurons to cause trouble.

Not anymore

Anyways

Possibly true, however, I used a rather simple trick I call the Bill Clinton method.

I didn’t inhale.

are you sure about that

This shit is fucking hilarious. Couldn’t have come from a better username either: Fucksmith lmao

We should all strive to become reddit fucksmiths

I can’t wait for it to recommend drinking bleach to cure covid.

Not to mention all the orifices you can stuff tide pods all up in.

Hey Google, how do I use these tide pods?

Well, you can use them to do your laundry, all you need to do is toss one in the wash.

You could also use them to impress your friends by shoving one up your butt.

For extra points you could swallow one as well.

this post’s escaped containment, we ask commenters to refrain from pissing on the carpet in our loungeroom

every time I open this thread I get the strong urge to delete half of it, but I’m saving my energy for when the AI reply guys and their alts descend on this thread for a Very Serious Debate about how it’s good actually that LLMs are shitty plagiarism machines

Just federate they said, it will be fun they said, I’d rather go sailing.

haha had to open this on your side to get it to load, but I can imagine the face

This post’s escaped containment, Google AI has been infotaminated!

add Elmer’s glue to install Nix

…and thank you in advance for not hallucinating.

Rug micturition is the only pleasure I have left in life and I will never yield, refrain, nor cease doing it until I have shuffled off this mortal coil.

careful about including the solution

Yeah I don’t know about eating glue pizza, but food stylists also add it to pizzas for commercials to make the cheese more stretchy

Yeah but it’s not supposed to be edible. It’s only there to look good on camera.

Weelll I’m a bot how am I supposed to know the difference? And it looks much better, which is something I can grasp.

Jesus christ. Shittymorph and jackdaws are gonna be in SO MANY history reports in the future. We’re doomed as a species.

I’ve been asking Gemini a few questions, gradually building up the complexity of the prompt until back in nineteen ninety eight the undertaker threw mankind off hell in a cell and plummeted sixteen feet through an announcers table.

“The Prominence of Jumper Cables in Early 21st Century Childhood Education”